Reimagining Social Media from the Margins

by Vaishali Soni

The Promise We Logged Into

Advertising. Agenda. Anxiety. Brainrot. Diabolical. Doomscrolling. Echo chambers. Polarising. Unavoidable. Voice.

In 2025, Point of View organised multi-city consultations across India — Goa, Bangalore, Mumbai, Delhi, and Hyderabad — with women, gender-diverse people, sex workers, people with disabilities, designers, technologists, and researchers to reimagine social media. These are the words that surfaced repeatedly. Some weigh heavier than others—unavoidable, anxiety, agenda, echo chambers, polarising. And somewhere in that accumulation, voice slips away, almost invisible beneath the weight of everything else. Together, these words tell a story—not of features or platforms, but of the visceral experience of inhabiting a space that millions of people depend on every day.

Social media was once sold to us as a dream — beautifully packaged with promises of connection, expression, creativity, and expansion. A space that encouraged us to move beyond our borders: geographical, social, and imaginative. At 13, I remember thinking: what does it mean to connect with someone across the seas? My world suddenly expanded beyond five school friends to include people I had never met—people with whom I could share dreams, ideas, hopes, feelings, and stories. Online, I could speak without being policed. I could share ideas without being silenced. I could explore parts of myself that had no space anywhere else.

At thirteen, the world suddenly felt closer — more accessible, almost endless. I could imagine so many possibilities.

At thirty-one, that same space feels constricting. What once invited exploration now produces a low, constant unease.

The Architecture of Harm

During our consultations, these harms were not abstract but lived in the everyday.

One participant from Mumbai shared:

“I wrote a critical article about a YouTuber posting political opinions. It was initially well received, but right-wing groups amplified the hate. The YouTuber filed a defamation case against me. I received rape threats, hired a lawyer, and filed FIRs myself. My organisation didn’t support me. I feel safer offline than online—I even wear a mask as a trauma response.”

Another participant said:

“After posting my views on Article 370, people I knew personally attacked me online. I was about to travel abroad and feared for my safety. Even friends and family couldn’t disagree civilly. I’ve stopped sharing opinions online — it feels useless. I’d rather focus on work on the ground.”

Participants from Bangalore spoke of images being misused on unsafe forums, of rape and death threats amplified by large accounts, and of being forced offline for their own safety.

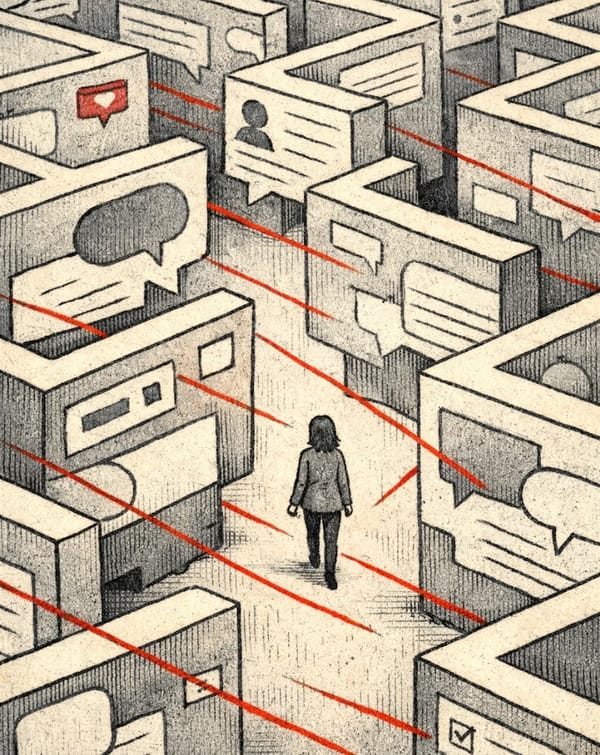

The boundary between the digital and the physical has collapsed. Online harm now carries offline consequences—fear, self-censorship, withdrawal, silence—while platforms continue to evade responsibility.

The question is no longer what this space allows us to do, but what it was designed to do—and for whom.

This shift was not accidental. It emerged from deliberate design choices shaped by profit, scale, and control. Social media platforms did not simply grow unwieldy over time; they were engineered to capture attention, extract data, and maximise engagement—often at the direct expense of care, safety, and user agency. What once felt like an open terrain of possibility has hardened into an architecture of surveillance, manipulation, and behavioural control.

Algorithms shift constantly, even as their logic remains opaque. Hate speech spreads rapidly, while tools meant to protect users, such as reporting, blocking, and consent settings, are often buried, ineffective, or ignored. Users are not told how these systems function, for whom they work, or when they turn punitive. Consent to data collection, including deeply personal information, is mandatory rather than optional. Opting out of surveillance or the relentless, irrelevant barrage of ads is impossible.

Every few months, users are handed new manuals on how to “adapt” to the algorithm. The dynamic has quietly inverted: we are no longer using platforms; we are working for them. Forced links between apps, with no meaningful exit, turn participation into a cycle that feels increasingly suffocating.

Platforms are built on an extractive logic — bottomless feeds, constant interface changes, one-size-fits-all design. For people with disabilities or anyone who needs stability and control, this becomes a treadmill you cannot step off. Techno-feudalism traps users in cycles of managing harm, leaving little room to imagine alternatives.

Using Reimagination as a Political Act

If platforms were designed to extract, then redesigning them begins with reclaiming imagination. As filmmaker Alex Rivera puts it, “The battle over real power tomorrow begins with the struggle over who gets to dream today.”

Ruha Benjamin echoes this in Imagination: A Manifesto:

“Whether we turn to children playing in the sand or tech billionaires offering us solutions while they build underground bunkers to survive the climate emergency, it matters whose imaginations get to materialise as our shared future.”

These reminders matter because today’s platforms—their harms, hierarchies, and defaults—were chosen, not given. By placing imagination at the centre of technology, we reject opaque, extractive systems and begin to build our own futures. We decentralise. We experiment. We play—not as frivolity, but as resistance. A reminder that platforms must serve people, not the other way around.

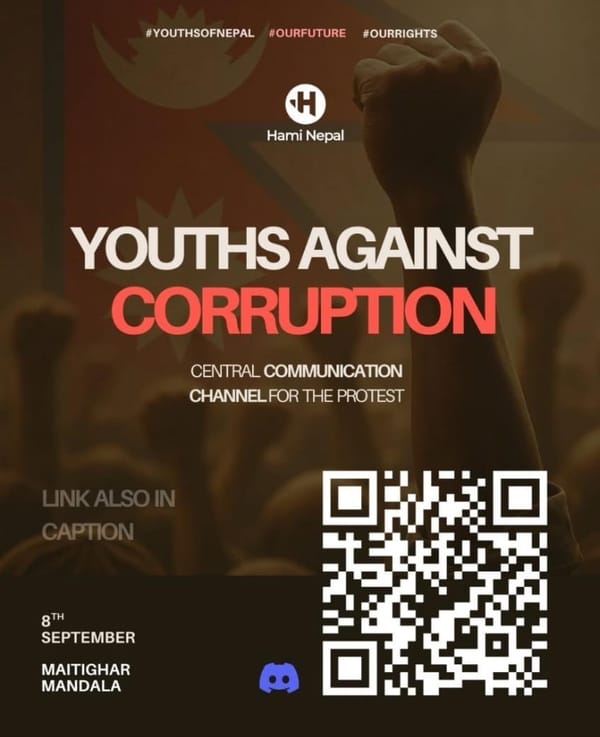

At our consultations across India, imagination met action as participants envisioned what people-centred alternatives could look like in practice.

The goal was simple but radical: to co-create social media systems that centre lived experience rather than profit or scale.

From these conversations emerged prototypes that transform abstract demands into tangible solutions:

- Care-centred onboarding systems, guiding users through safety, consent, customisation and community norms rather than plunging them into opaque interfaces.

- Value-based data practices, allowing people to decide what they share, with whom, and how it is used—making consent meaningful rather than compulsory.

- Safety-by-design features, grounded in personal choice and collective accountability instead of opaque automated enforcement.

- Imaginative interaction tools, encouraging playful, creative engagement with terms and conditions while giving users control over visibility, reach, and exposure.

For example, instead of being pushed through default onboarding, users can actively shape their experience — choosing their cultural context, engaging with terms and conditions in accessible formats, and reviewing each category of data before deciding what to share or withhold. Control is built into the very first interaction, rather than hidden deep within the platform.

These prototypes demonstrate that platforms can be plural, people-centred, and liberatory. Built through a non-Western, queer, feminist, and intergenerational lens, they challenge who sets defaults, whose needs are prioritised, and what values underpin digital spaces. The futures we inherit are not inevitable: the extractive, opaque, and harmful defaults of social media were intentionally chosen, and so too can alternatives be. Through imagination, co-creation, and lived experience, we can build platforms that serve people, not profits; communities, not algorithms. In reclaiming social media, we reclaim possibilities, voice, and agency.

Author Bio

Vaishali Soni is a multidisciplinary creative who blends storytelling, design, and art to explore themes of identity, human rights, and technology. With roots in journalism, she likes to wear many hats: writer, illustrator, workshop facilitator, art-maker, and more. At Point of View, she works as a Design Specialist, managing the organisation's visual universe.