Designing a ‘safe’ digital future for Indonesia’s women activists and journalists

by Irma Garnesia

This essay draws on interviews with journalists, editors, lawyers, fact-checkers, activists, and digital rights advocates conducted between May and August 2025 as part of the Australian National University’s ‘Information Abundance in Southeast Asia’ project. It contends that Indonesia’s current digital ecosystem systematically leaves women vulnerable because its legal protections, platform governance, and social media architectures reinforce one another’s deficiencies.

The harassment engine: informal networks as de facto power

Kania (not her real name) has spent 15 years advocating online for women’s rights and on public policy issues, and that work has forced her to understand Indonesia’s sprawling harassment ecosystem: political ‘buzzers’, conservative religious groups, and troll networks that mobilise quickly to silence women. These loosely organised, highly reactive actors come together in an informal architecture of power that causes reputational, psychological, and occasionally even physical harm to women activists and journalists.

When Kania criticised conservative Islamic leaders for restricting women’s participation in public life, she was met with a coordinated backlash: “I faced attacks from conservative Islamic organisations like FPI [the Islamic Defenders Front]. They call me ‘liberal feminist,’ and likewise call everybody I am affiliated with a label.”

Over the years, she grew accustomed to dogpiles of personal insults, often coupled with attempts to undermine her professional credibility. But a 2015 episode changed what harassment meant for her. After she tweeted criticism of President Joko Widodo for trivialising the severity of the Jambi forest fires through posts featuring his grandchild, a friend in Indonesia’s communications ministry discreetly told her that the tweet was circulating among a select group of police generals.

“I was afraid, not of buzzers, but because I am my family’s breadwinner,” Kania says now. “I can’t risk my own safety over a tweet.”

She withdrew from online spaces, a coping mechanism she still resorts to whenever one of her posts goes viral. She also sees a therapist regularly to help manage the anxiety born of years spent confronting digital violence.

Kania’s fear is neither exceptional nor excessive. A 2021 PR2Media survey of 1,256 female journalists found that 85.7 percent had experienced violence, and 80 percent had experienced both online and in-person abuse. The Southeast Asia Freedom of Expression Network (SAFEnet) documents widespread cases of doxing, hacking, photo manipulation, gender-based slurs, and other abuses that mostly target female journalists and activists.

These incidents hardly ever happen in isolation. Instead, they’re part and parcel of coordinated political, cultural, and algorithmic networks. Buzzers, Kania cautions, are more than paid propagandists.

“Most of them feel like heroes doing national action,” she observes.

Ideology and anonymity, which serve as their tools of coordination, build a righteousness so profound that harassment becomes a kind of public service. Once targeted, women have little agency.

“They gaslight and threaten you. They insist on ‘data’ or command you to prove your point. You can’t win,” says Kania.

The harassment engine works because it is replicable, and because the design of the platforms themselves often amplifies it.

Amplification without accountability

Social media design is the origin of much digital violence, and Indonesia’s harassment patterns, rooted in platform architecture, are evidence of this. With algorithmic virality, effortless sharing, anonymity at scale, and content that flows freely across platforms, social media enables coordinated attacks and helps them proliferate rapidly.

Kania’s story reflects one of the wider patterns that emerged from our interviews: platforms give more power to fringe actors than to victims. Nala (not her real name), a fact-checker we also interviewed, offered a striking example. A group of anti-vaccine advocates targeted her over one of her articles, posting her picture in Facebook groups and insulting her through direct messages. Even after she filed formal reports, Meta refused to escalate the case or remove the content.

Nenden S. Arum, SAFEnet’s Executive Director, says that although platforms have reporting mechanisms in place, these are often inconsistent and disorganised. In Nenden’s view, gender-based violence should be prioritised over hate speech or mis- and disinformation, yet platforms place it in the same category. Even when gender-based violence targets activists and journalists, digital platforms refuse to recognise the urgency of the situation.

“They subcontract safety work, outsourcing it to civil society,” says Nenden. “And then go around acting as though they’re partners in protecting users in the Global South. But the burden falls on us.”

Platforms also justify inaction in the language of free speech, particularly since the radical turn in global politics that came with the second Trump presidency. “They only respond to cases involving ‘imminent threats,’” Nenden says. “In effect, platforms have become enablers of gender-based violence.”

Another chronic failure is content moderation, which in Southeast Asia goes hand in hand with cultural misalignment. Moderation teams, most of them based outside the region or with only a single representative in it, often struggle to grasp how Southeast Asian women understand modesty, shame, or exposure. SAFEnet and KOMPAKS, a civil society coalition against sexual violence, often have to explain the local context in detail before platforms act.

Yuri Muktia, a coordinator at KOMPAKS, explains that reporting becomes even harder when victims don’t speak English. Civil society groups are still calling for culturally sensitive systems and faster escalation pathways.

As Yuri points out, these platform failures often reflect weak government oversight. “If our government doesn’t take digital safety seriously, neither will global tech companies.”

Protection without power

Indonesia’s formal legal system offers a patchwork of laws for dealing with digital harm, but these laws do not put victims, especially women, at the centre. The 2022 Law on the Crime of Sexual Violence (TPKS) was notable for recognising electronic-based sexual violence, such as the non-consensual sharing of intimate material and online stalking, as a crime.

But as Siti Tardi of the Indonesian Legal Resource Center notes, implementation has been slow. Police frequently do not apply TPKS provisions, arguing that it is unclear whether the content was initially shared with consent, a question that is neither directly relevant nor possible to assess once the content has been published online.

The Personal Data Protection (PDP) Law also falls short, as it does not classify women’s images or bodies as sensitive personal data.

Meanwhile, the Electronic Information and Transactions (ITE) Law remains a problem. Its focus on regulating information exchange and policing morality has long led to victims, rather than perpetrators, being criminalised. The law’s expansive defamation clauses and nebulous harm provisions continue to be used to silence women.

In short, Indonesia’s legal architecture acknowledges digital violence, but it offers no structure to empower victims. There are laws in place, but their enforcement is inconsistent and not always informed by the gendered nature of online harm.

Reimagining digital power

By 2026, Indonesia will face a digital landscape defined by AI-generated deepfakes and intricate influence operations, alongside a widening gap between technological change and the country’s slow-moving legal implementation. The central challenge is the imbalance of power built into the architecture of digital life, an imbalance that disproportionately affects women journalists and activists.

Indonesia must move from a reactive, individualised view of safety to a structural one. First, platform governance can no longer depend on voluntary action. Global tech companies must be required to establish localised moderation teams and open escalation channels for people facing gender-based harassment.

Safety must also be built into platforms by design. This includes friction mechanisms that slow down coordinated harassment, such as rate limits, reply restrictions, and traceability measures for mass-created accounts. Civil society organisations (CSOs) can work with tech companies to build platform-level safeguards that detect harassment clusters before they escalate, rather than afterwards.

Indonesia’s legal framework also requires the political will to enforce it. The TPKS Law must be fully implemented, the PDP Law must designate women’s bodies as sensitive data, and the ITE Law must stop criminalising victims.

Indonesia’s digital safety future is uncertain, but it is not yet decided. If we deliberately redesign our online spaces around accountability, cultural understanding, and gendered protection, then safety becomes not just an aspiration but a direction.

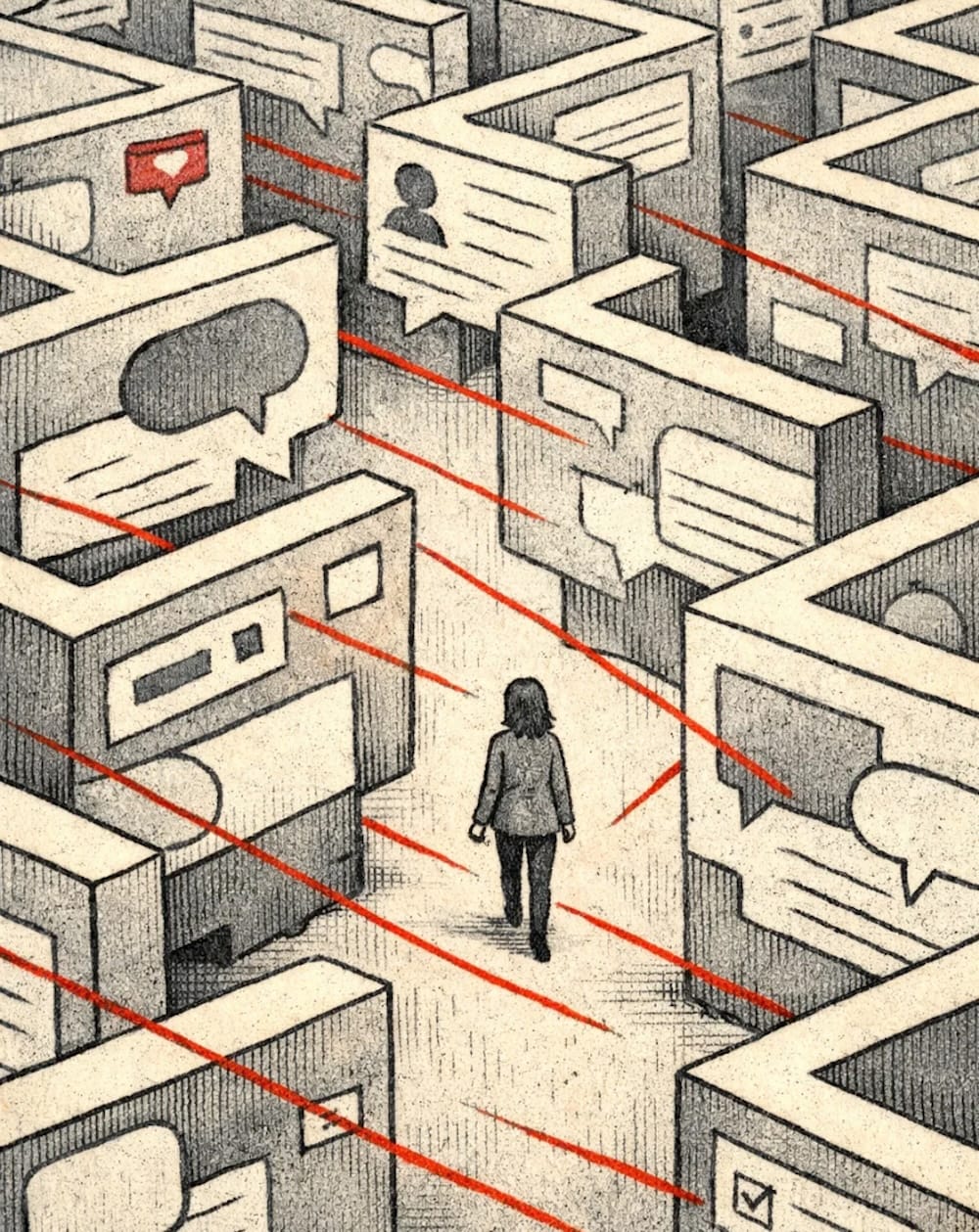

For women journalists and activists, Indonesia’s digital landscape is an architecture that spreads abuse. In online environments shaped by AI-driven manipulation of the public and the coordinated behaviour of political buzzers, Indonesian women must now navigate a maze of institutional, legal, and platform failures.

As Indonesia looks ahead to 2026 and beyond, its failure to address these problems should be understood as a structural weakness, rather than as grounds for placing the burden of digital safety on individuals. Online harassment is not merely the effect of a polarised political culture or of small-scale misogynistic groups; it is the product of platform design, informal harassment networks, cultural blind spots in moderation, and a legal system that remains out of step with victims’ lived experiences.

Author Bio:

Irma Garnesia is a media and communication researcher based in Jakarta, Indonesia. Her research focuses on the intersections of journalism, politics, and information ecosystems in Southeast Asia. Her work has appeared in outlets including the International Journal of Environmental Communication, Fulcrum ISEAS, and Global Voices.