Age assurance and the new face of surveillance

by Saritha Irugalbandara

The global push for age-verified social media use has accelerated since Australia introduced strict age-based access restrictions for under-16s using social media in December 2025. Under the Online Safety Amendment (Social Media Minimum Age) Bill, introduced a year earlier, companies are mandated to take “reasonable steps” to prevent under-16 users from creating accounts through the use of age assurance technologies.

The policy choices that shaped the Australian law have been several years in the making, with similar regulatory debate intensifying in the United Kingdom, India, Malaysia, and across the European Union. A Tech Policy Press mapping shows a global scatter of 42 countries with some level of consideration or implementation of age-based restrictions for social media use, with these same issues at its heart. Overall, the urgency to protect children against child sexual abuse and exploitation material (CSAM) and, in some jurisdictions, ‘inappropriate’ and ‘violent imagery’ is central to the push.

Restriction-as-protection is not a novel idea. Age limits on cigarettes, alcohol, gambling, and visual media have existed for decades. Similar arguments on safeguarding children against an adult internet are regularly brought forward by grassroots groups, parents, and teachers. Datafication, encroachment on privacy rights, and repeated failures by major tech firms to enforce their own safeguarding policies have resulted in growing public restlessness about safety risks.

Lawmakers at different levels have echoed these anxieties. In Karnataka, India, a ban has been proposed to “prevent the adverse effects of increasing mobile usage on children”. The Indonesian government has stated it is “stepping in so parents no longer have to fight alone against algorithms”, with implementation of a phased ban beginning at the end of March. At an International Fact-Checking Network event, Brazil’s most prominent Supreme Court Justice, Alexandre de Moraes, declared what many have grappled with for years: self-regulation has proven a failure. “We are putting tech companies on notice,” wrote the UK’s Keir Starmer on tightening regulation, as the Labour Government began a national consultation on age restrictions.

Within this context, bans and restrictions become serious, if somewhat reactive, policy considerations, and the cross-regional appeal is considerable. A critical view of existing systems, norms around children’s rights, and the privatisation and ownership of data paints a complex regulatory challenge that requires more than retroactive consultations and new laws.

A brief note on rights of the child

Children’s rights frameworks often struggle to fully recognise children as individuals capable of independent thinking and choice. This sits uncomfortably with international standards on the rights of the child: normatively speaking, there is very little unconditional acceptance of children’s individuality or of their right to autonomy, privacy, and expression. As a result, safety continues to be determined within rigid and protectionist framings.

For victim-survivors of harm and abuse (children and adults), many protection processes involve further extraction of personal information and the sensationalising of some forms of abuse as more serious than others. This is highly evident in the ongoing policy conversations. The harm that children face on social media is framed as exceptional. Imagery of sexual violence and the lethal consequences of cyberbullying anchor the urgency with which proposals, laws, and technologies are deployed. Focusing narrowly on specific forms of online harm has also obscured the broader picture of how children are treated and impacted by technology: be it unequal access to technology, the unmitigated manosphere fostering violent misogyny in young boys, or weapons automation that continues to massacre and maim children in West Asia.

Hinging the regulatory debate on child safety against sexual crime has had other consequences, too, on censorship and freedom of expression. For example, the Australian regulation has language on “age-appropriate” content referring to pornography, framing it as inherently harmful, violent, or inappropriate. This ignores the many reasons young people may encounter or seek out pornography at different ages and stages of development. Adolescents, for example, may seek pornography due to curiosity, the lack of resources on intimacy and sexual behaviour, or for sexual arousal. It is common for adolescents to share intimate images as part of building intimate relationships. By and large this is age-appropriate behaviour. Safeguards against exposing non-consenting children to pornography should be implemented at a design-level, especially with regard to advertising tactics that use graphic imagery to produce revenue.

The business of age assurance

Much has already been written about the impact of blanket bans on internet freedoms and the disempowerment of children, parents, and teachers in making informed choices about tech use. Research on device and social media use in children draws nuanced conclusions on how mental health, access to digital literacy skills, socioeconomic background, and the availability of adult guidance play a critical role in shaping a young person’s choices around their online behaviour, privacy, and expression. In practice, child safety has become a Trojan horse that allows policymakers to sidestep these complexities.

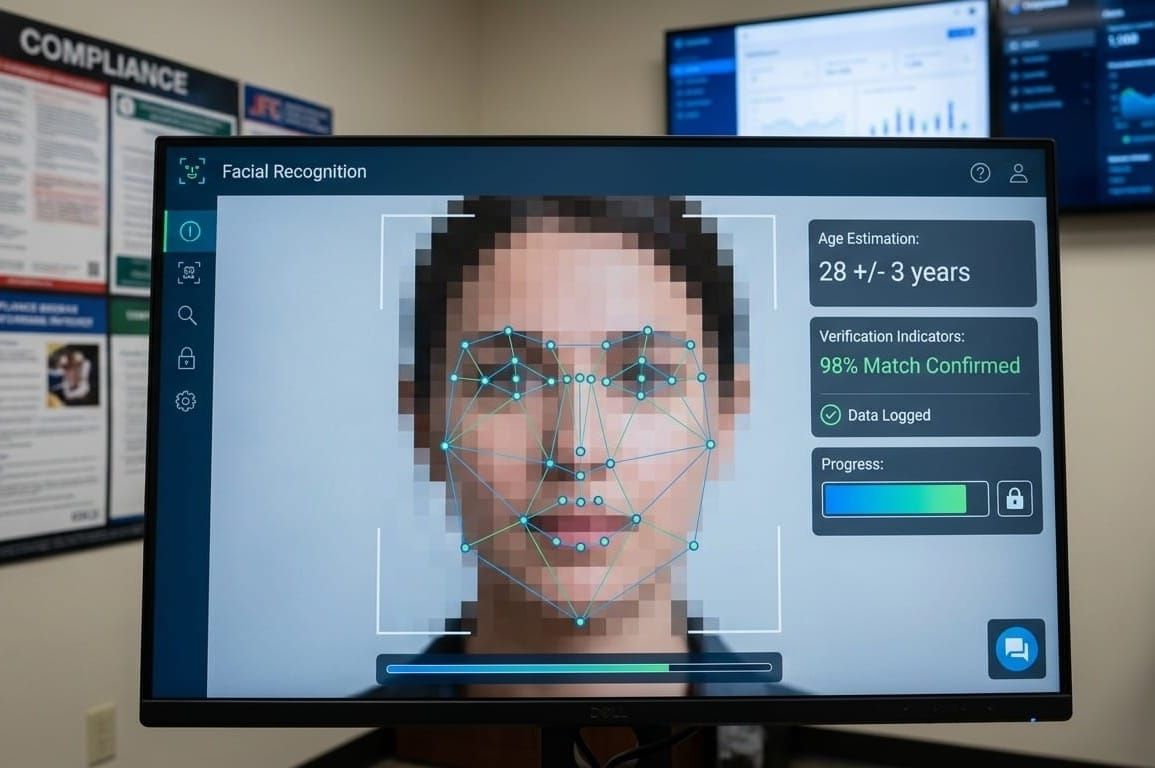

At the heart of this lies an entire industry of biometric vendors and digital ID solutions providers quietly reshaping how governments approach online safety. Companies like Yoti, for example, are fast developing in-device verification for EU markets, while others like Onfido have partnerships with Visa and Mastercard to support mandated age checks. Both companies have enjoyed increased market value and profit margins, and the age-assurance market is projected to become a multibillion-dollar industry by 2029. In Malaysia, major tech firms are engaged in a tug of war over platform-level versus device-level age signalling while government agencies are exploring age-verification methods ahead of a similar ban. Regional governments in India are considering similar measures, while a Kenyan proposal aims to mandate national ID verification for all social media users.

Although industry bodies such as the Age Verification Providers Association tout these technologies as ways to make the internet “age-aware”, it is unlikely that over-reliance on technological ‘fixes’ will deliver on these promises. Research and testing on existing age assurance technology demonstrate limited accuracy, gender and racial biases, and errors in age estimation. Many rely on automated systems with little transparency around the data used to train them. The Australian rollout has reported cases of adults being misidentified as minors and being locked out of accounts. Error rates, which are higher at ages closer to 16, are reported as “inevitable”, requiring other forms of ID checks to complete verification. Mandating such requirements centralises large caches of sensitive personal information and exposes them to the risk of breach. Ahead of the ban, children in Australia described ways of bypassing the age checks: using VPNs, using AI software to appear older, or even using identification documents of an older sibling or parent.

As well as legislating age-based restrictions, governments are attempting to undermine encryption in a myriad of ways. Suggestions for CSAM prevention include “chat control”, which would mandate companies to scan all communications for inappropriate or violating content at a platform level. This includes possible application to platforms such as Signal and WhatsApp that use end-to-end encryption (E2EE). Client-side scanning, which is presented as a middle ground by UK legislators, still undermines E2EE and effectively turns devices into surveillance points. Mounting pressure to tackle child predators has presented governments with an opportunity to dismantle privacy infrastructures and build further inroads for the surveillance architecture.

In the underbelly of the Trojan Horse

Overall, as compliance tech cashes in, governments rush through policies that subvert basic principles of data privacy, data minimisation, and the right to anonymity. And in many ways, age-assurance technology is closely linked to the expansion of digital ID systems. In practice, many age verification methods rely on identity documents, biometric data, or third-party verification services. This creates a pathway where systems designed to check age begin to depend on, and reinforce, broader identity infrastructures. Over time, what begins as a narrow safety measure can expand into more generalised forms of identification and data collection across digital services.

The flurry of social media laws in the last few years entrenched restrictive measures as necessities. Disinformation and hate speech became convoluted arguments to dismantle anonymity. In many jurisdictions, safe harbour provisions, which would protect companies from criminal liability for user-generated content, were actively sought (and approved) while criminal penalties were levelled on end-users. Here, too, “protecting women and children” was propped up as a policy priority, although organisations working directly with victim-survivors report little change in the frequency or material conditions causing technology-facilitated gender-based violence (TFGBV) and CSAM. The weaponisation of criminal legal instruments around social disharmony, public health, and freedom of expression intensified during the Covid-19 pandemic. Soft surveillance was mainstreamed as a preventative measure. Digital identification policies were rolled out shortly after, in part enabled by the normalisation of social control implemented under the guise of public good. Under the banner of Digital Public Infrastructure (DPI), tech evangelists like Bill Gates and Larry Ellison preach digital identification systems as a great equalizer. Despite mounting dissent from privacy rights groups, several countries are considering adopting at least some layer of digitalisation without sufficient consultation. India’s Aadhaar continues to be hailed as a success story by industry at large despite manifest issues of service exclusion and discriminatory application. Sri Lanka is taking notes from its neighbour. The UK is attempting to revive a 2006 digital ID scheme for ‘right to work’ verification amidst concerns over surveillance expansion. South Korea is hatching efforts to bring back its ‘real name’ identity system for mobile phone use, a policy that was struck down as unconstitutional back in 2012.

It is not a coincidence that many of the leading voices supporting and offering age assurance “solutions” are, primarily, experts in digital ID. It is also not a coincidence that children, the people in this world with the least autonomy and choice, form a central and emotional tenet in this global push funded and captured by private interests.

This does not suggest that all actors are aligned or operating with the same intent. Governments, technology companies, and solution providers often have different priorities and constraints. However, their incentives can converge in ways that favour greater data collection, verification, and control. The result is a shared trajectory, where systems introduced for safety or compliance purposes gradually expand the scope and scale of digital identification and monitoring.

The combined push for DPI and age-verification intensifies private ownership of personal data, facilitated through policy choices that mandate mass data collection. The new proposals around social media use are primed to essentialise “accurate” identification.

As far as policy traps go, age assurance garners more sympathy and support than many other proposals. And although it does little to challenge industry standards and business models, the uncritical buy-in makes it all the more serious for privacy, autonomy, and expression on the internet. Evidence-based scrutiny of invasive data collection, algorithms, platform business models, and deteriorating privacy standards across major digital platforms must be prioritised by policymakers if they are to operate in good faith. Child rights advocates have insisted on developing positive, child-friendly spaces and experiences to support intellectual and social development as part of children’s digital exposure. Investments in building these systems are crucial and, as outlined earlier in this article, we must reckon with the bigger picture of children’s rights and freedoms. Regulators, grassroots groups, technologists, and digital rights advocates can no longer afford to work in silos. All that has done so far is allow private profit to shape our access to technology, while entrenched issues around safety continue to multiply. Compromising on privacy today will shape the internet that today’s children inherit tomorrow.

Author Bio

Saritha Irugalbandara is a queer feminist researcher from Sri Lanka. Their research and advocacy focus mainly on gender, technology, expression, and accountability through an intersectional feminist lens. Their advocacy and organising have also focused on freedom of expression, civic participation and bodily autonomy of politically marginalised people, and building community-centric justice and accountability systems in the global south.

Post image generated by Gemini